Why Persistent Testing Is The Key To Software Reliability

In the hyper-competitive digital landscape of 2026, software is no longer just a tool; it is the lifeblood of global commerce, communication, and infrastructure. As systems grow increasingly complex, incorporating AI-driven microservices and decentralized architectures, the margin for error has vanished. Software reliability—the probability that a system will perform its required functions under stated conditions for a specified period—has become the ultimate competitive advantage.

Many development teams still treat testing as a final checkpoint before deployment. However, the most successful organizations have shifted toward persistent testing, a methodology that treats software quality assurance (SQA) as a continuous, lifecycle-wide commitment. In this article, we explore why constant, repeated, and rigorous testing is the most dependable path to building software that doesn’t just work, but stays working.

The Paradigm Shift: From Periodic to Persistent Testing

Historically, testing was a siloed phase occurring at the end of the development cycle. In 2026, this approach is considered a liability. Persistent testing integrates automated, ongoing validation into every stage of the Software Development Lifecycle (SDLC). By embedding reliability checks into the CI/CD pipeline, teams can detect defects close to the moment they are introduced, which supports a true DevOps culture.

The fundamental difference between functional testing and reliability testing lies in duration and consistency. While functional testing checks if a feature works once, reliability testing confirms it works consistently over time under various environmental stressors. Repeating those checks as the codebase evolves is what keeps the system’s stability intact and, in turn, user satisfaction high.

Why Short-Term Testing Fails

Late, Monolithic Fixes: Testing only at the end leads to massive, monolithic patches that are prone to errors and hinder defect prevention efforts.

Performance Degradation: Without ongoing load testing, small inefficiencies accumulate, leading to “code rot” that only reveals itself during peak usage.

Technical Debt: Fixing bugs discovered in production is commonly estimated to be 10 to 100 times more expensive than catching them during the development phase. Persistent testing reduces this burden by surfacing defects while they are still cheap to fix.

Decoding Reliability: MTBF and MTTR in 2026

The contribution of persistent testing to software reliability can be measured through two core metrics: Mean Time Between Failures (MTBF) and Mean Time To Repair (MTTR). These metrics act as the pulse of your software’s health.

MTBF measures the average time elapsed between failures of a system during normal operation. A high MTBF indicates a robust, reliable system. Persistent testing works to increase this number by simulating long-duration workloads (often called soak or endurance testing), stressing the system to find memory leaks or resource exhaustion before they impact real users.

Conversely, MTTR measures how quickly your team can recover from a failure. Persistent testing isn’t just about preventing crashes; it’s about resilience engineering. By automating failure recovery testing (Chaos Engineering), teams can ensure that when a failure does occur, the impact is minimized and the recovery is near-instant.
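As a rough illustration of how these two metrics can be derived from an incident log, here is a minimal sketch. The `Incident` record, the 720-hour observation window, and the sample outages are all hypothetical; a real team would pull this data from its incident tracker or monitoring system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Incident:
    """One outage, with times in hours since the start of the observation window."""
    start: float  # when the failure began
    end: float    # when service was restored


def mttr(incidents: list[Incident]) -> float:
    """Mean Time To Repair: average downtime per incident."""
    return sum(i.end - i.start for i in incidents) / len(incidents)


def mtbf(incidents: list[Incident], window_hours: float) -> float:
    """Mean Time Between Failures: total uptime divided by the number of failures."""
    downtime = sum(i.end - i.start for i in incidents)
    return (window_hours - downtime) / len(incidents)


def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability implied by the two metrics."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Hypothetical 30-day window (720 hours) containing three outages.
incidents = [Incident(100.0, 101.0), Incident(350.0, 350.5), Incident(600.0, 601.5)]
print("MTBF (h):", mtbf(incidents, 720.0))
print("MTTR (h):", mttr(incidents))
print("Availability:", availability(mtbf(incidents, 720.0), mttr(incidents)))
```

Tracking these numbers release over release shows whether persistent testing is actually moving them: MTBF should trend up and MTTR down.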

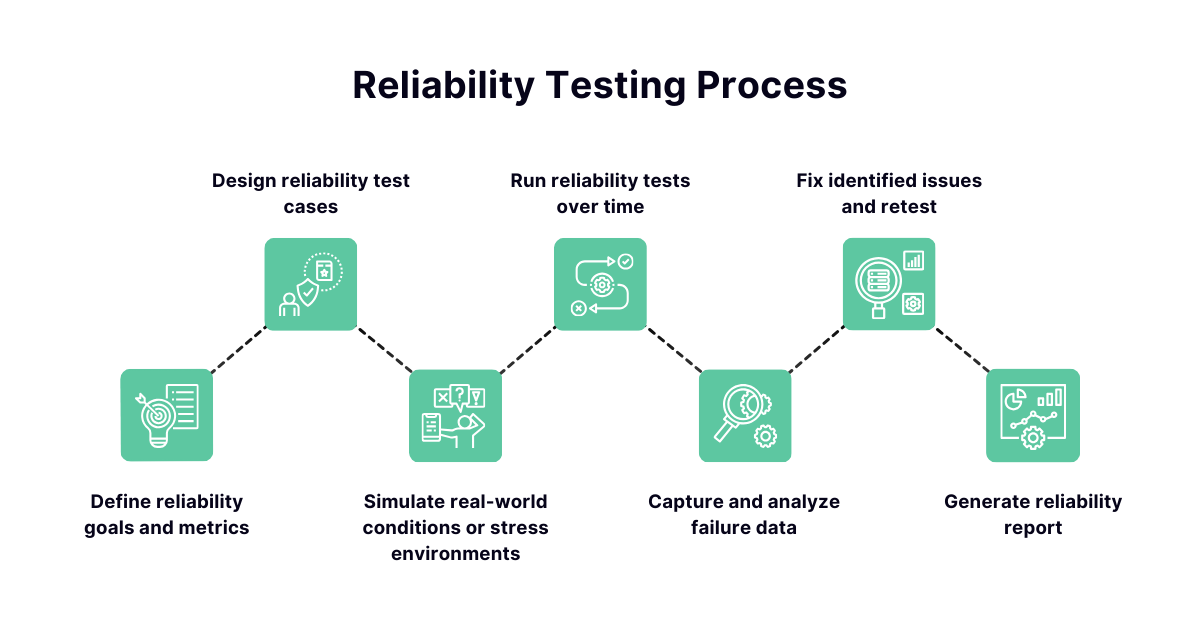

Building a Robust Reliability Test System

Building a system that stays working requires more than just a set of scripts; it requires a reliability-first culture. In 2026, this means adopting a layered testing strategy that covers various dimensions of software behavior.

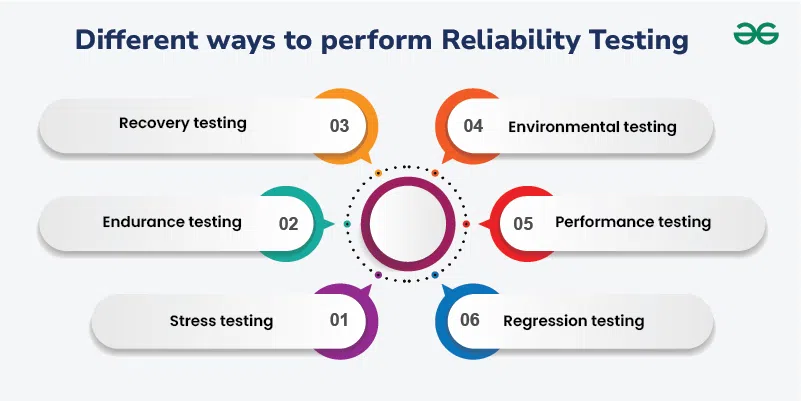

1. Stress Testing

Stress testing pushes your software beyond its normal operational capacity. By subjecting your application to extreme workloads, you can identify the “breaking point.” Persistent stress testing helps developers understand how the system fails, allowing for the implementation of graceful degradation protocols.
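The search for a breaking point can be sketched as a simple ramp: increase the load step by step and stop at the first level where the error rate crosses a limit. In the sketch below, `simulated_service` and its capacity of 500 are hypothetical stand-ins; a real stress test would drive actual traffic against the system and count real failures.

```python
from typing import Callable, Optional


def simulated_service(concurrent_requests: int, capacity: int = 500) -> int:
    """Hypothetical stand-in for a real system: returns how many requests failed.

    Requests beyond `capacity` are rejected; a real test would send real traffic.
    """
    return max(0, concurrent_requests - capacity)


def find_breaking_point(
    run_at_load: Callable[[int], int],
    start: int,
    step: int,
    max_load: int,
    max_error_rate: float,
) -> Optional[int]:
    """Ramp load upward and return the first level whose error rate exceeds the limit."""
    load = start
    while load <= max_load:
        failures = run_at_load(load)
        if failures / load > max_error_rate:
            return load
        load += step
    return None  # no breaking point found within the tested range


breaking_point = find_breaking_point(
    simulated_service, start=100, step=100, max_load=2000, max_error_rate=0.05
)
print("Breaking point:", breaking_point)
```

Running a ramp like this on a schedule makes it visible when a code change lowers the breaking point, which is the signal that graceful degradation needs attention.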

2. Load Testing

Unlike stress testing, load testing evaluates how the system performs under expected peak usage. With the rise of global, high-concurrency applications, load testing must be run continuously against staging environments that mirror production traffic patterns.
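A minimal load-test sketch might look like the following. It sends a fixed number of requests through a bounded worker pool and reports the 95th-percentile latency. The `handle_request` function is a hypothetical placeholder for a call to a staging endpoint, and the request counts are illustrative rather than recommended values.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import quantiles


def handle_request(request_id: int) -> int:
    """Hypothetical request handler; replace with a call to the staging endpoint."""
    time.sleep(0.001)  # simulate a small amount of work
    return request_id


def timed_call(request_id: int) -> float:
    """Return the latency of one request in seconds."""
    started = time.perf_counter()
    handle_request(request_id)
    return time.perf_counter() - started


def run_load_test(total_requests: int, concurrency: int) -> float:
    """Send requests through a fixed-size worker pool and return p95 latency."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(timed_call, range(total_requests)))
    # quantiles(n=20) yields 19 cut points; the last one is the 95th percentile.
    return quantiles(latencies, n=20)[-1]


p95 = run_load_test(total_requests=200, concurrency=20)
print(f"p95 latency: {p95 * 1000:.2f} ms")
```

In a pipeline, the returned value would typically be compared against a latency budget so that the build fails when expected peak traffic can no longer be served in time.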

3. Regression Testing

As codebases change, previous functionality often breaks. Automated regression testing acts as a safety net. By running comprehensive suites every time a commit is pushed, you ensure that new features do not compromise existing stability and maintain high test coverage across the application.
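As an illustration, a regression suite pins down behavior that has already been shown to matter, so a later change cannot silently undo it. The sketch below uses Python's standard `unittest` module; `parse_version` and the test cases are hypothetical examples, not taken from any particular codebase.

```python
import unittest


def parse_version(text: str) -> tuple[int, ...]:
    """Hypothetical function under test: parse 'v1.2.3' or '1.2.3' into a tuple."""
    return tuple(int(part) for part in text.strip().lstrip("v").split("."))


class ParseVersionRegressionTests(unittest.TestCase):
    """Each case pins behavior that an earlier (imagined) bug report depended on."""

    def test_plain_version(self):
        self.assertEqual(parse_version("1.2.3"), (1, 2, 3))

    def test_leading_v_prefix(self):
        self.assertEqual(parse_version("v2.0.1"), (2, 0, 1))

    def test_surrounding_whitespace(self):
        self.assertEqual(parse_version(" 1.10.0\n"), (1, 10, 0))

    def test_numeric_not_lexical_ordering(self):
        self.assertGreater(parse_version("1.10.0"), parse_version("1.9.0"))


def run_suite() -> bool:
    """Run the regression suite and report whether every test passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ParseVersionRegressionTests)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()


if __name__ == "__main__":
    raise SystemExit(0 if run_suite() else 1)
```

Wired into CI (for example with `python -m unittest` on every push), a failing case blocks the merge before the regression reaches users.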

The Financial and Operational Impact of Reliability

Reliability is not just a technical goal; it is a business imperative. In 2026, downtime is measured in lost revenue, eroded trust, and regulatory fines. Persistent testing pays off in several concrete ways:

Reduced Customer Churn: Users are increasingly intolerant of buggy software. Reliability is a major factor in user retention and contributes directly to user satisfaction.

Enhanced Developer Productivity: When testing is persistent and automated, developers spend less time “firefighting” production bugs and more time innovating.

Compliance and Security: Many industries now require proof of reliability. Consistent testing logs provide the audit trail necessary to meet modern security standards.

Integrating AI into Persistent Testing

By 2026, AI has changed how teams approach testing. AI-driven testing tools can flag where a failure is likely to occur based on historical code patterns, and they can automatically generate test cases for edge scenarios that a human developer might overlook. These capabilities are typically delivered through test automation frameworks, which makes them a natural fit for a persistent testing pipeline.

Furthermore, self-healing test scripts are becoming common. When a UI element changes slightly, AI-powered frameworks can adapt the test script in real time, preventing the “false positives” (tests that fail even though the product is not broken) that plague traditional automation. This allows persistent testing to scale without requiring a massive army of QA engineers.

Best Practices for Implementing a Persistent Testing Culture

If you want to transition your team to a persistent testing model, follow these actionable steps:

- Shift Left: Begin testing at the design phase. Use Test-Driven Development (TDD) to ensure code is testable from line one.

- Automate Everything: If a test can be written, it should be automated. Manual testing should be reserved for exploratory scenarios, not verification. Implementing robust test automation frameworks is crucial here.

- Create Production-Like Environments: Reliability testing is only as good as the environment it runs in. Use Infrastructure-as-Code (IaC) to ensure your test environments are carbon copies of your production setup.

- Monitor and Iterate: Use observability tools to track system performance in real time. Feed this data back into your testing suite to create a closed-loop feedback system.

- Foster Accountability: Make quality a shared responsibility. When developers own the reliability of their code, the quality of the entire product improves.
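The "Monitor and Iterate" step above can be sketched as a small feedback function: take request timestamps observed in production, find the busiest minute, and use that (plus a safety margin) as the target rate for the next load test. The sample data and the 1.5x `headroom` factor are hypothetical; real values would come from your observability tooling and your own risk tolerance.

```python
from collections import Counter


def peak_requests_per_minute(request_timestamps: list[float]) -> int:
    """Bucket request timestamps (in seconds) by minute and return the busiest bucket."""
    per_minute = Counter(int(ts // 60) for ts in request_timestamps)
    return max(per_minute.values())


def next_load_test_target(request_timestamps: list[float], headroom: float = 1.5) -> int:
    """Set the next load-test rate to observed peak traffic plus a safety margin."""
    return round(peak_requests_per_minute(request_timestamps) * headroom)


# Hypothetical observability export: seconds since the start of the window.
observed = [1.0, 5.0, 30.0, 61.0, 62.0, 63.0, 64.0, 130.0]
print("Next load-test target (requests/min):", next_load_test_target(observed))
```

Recomputing the target from fresh production data on each cycle is one simple way to keep test conditions aligned with real usage.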

The Future of Software Reliability

As we look toward the latter half of the decade, the demand for “always-on” software will only increase. As technologies such as edge-based AI and, eventually, quantum computing are integrated, system complexity is likely to keep growing. In that landscape, persistent testing will evolve from a best practice into an existential requirement, driving continuous software quality assurance (SQA) efforts.

Organizations that view testing as a continuous process will thrive, while those that continue to treat it as a bottleneck will be left behind. Reliability is not a destination; it is a journey of constant refinement, measurement, and improvement. By embracing the principles of persistent testing, you are building the foundation for a resilient, scalable, and high-performing digital future.

Conclusion

The evidence points one way: persistent testing is among the most important factors in ensuring software reliability in 2026. By shifting from reactive, periodic testing to a proactive, continuous model, teams can drastically reduce downtime, lower maintenance costs, and deliver a superior experience to their users.

Remember, software reliability is not about perfection; it is about predictability. It is about knowing how your system behaves under pressure and having the confidence that it will continue to serve its purpose, no matter the circumstances. Start implementing your persistent testing strategy today, and transform your development process from a cycle of constant fixes into a streamlined engine of innovation.